AWS S3 VPC Flow Log Access


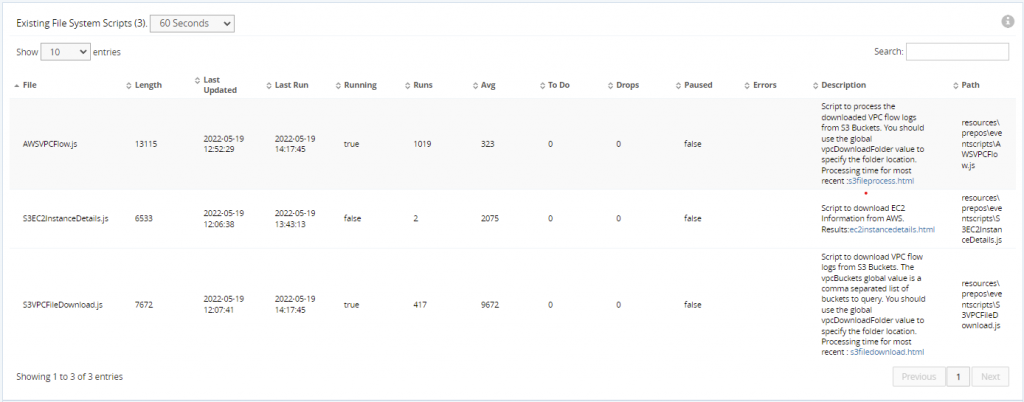


Revision as of 16:57, 26 August 2022

Observer GigaFlow uses the Amazon Web Services (AWS) Command Line Interface (CLI) to access AWS services. You can install the latest version of the AWS CLI from https://aws.amazon.com/cli/.

Note: The AWS CLI must be configured under the same user account that runs GigaFlow; otherwise GigaFlow cannot access the CLI configuration profile. On Linux, you can use the "su" command to switch to that user and then run the AWS CLI commands.
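To confirm which account you are running as and where that account's AWS CLI settings are expected to live, a small helper such as the following can be run as the GigaFlow user (for example, after "su" on Linux). It is a sketch, not part of GigaFlow; the function name `aws_cli_locations` is hypothetical. It relies on the AWS CLI defaults of `~/.aws/config` and `~/.aws/credentials`, which the `AWS_CONFIG_FILE` and `AWS_SHARED_CREDENTIALS_FILE` environment variables can override.

```python
"""Show the current user and where that user's AWS CLI files are expected."""
import getpass
import os
import shutil
from pathlib import Path


def aws_cli_locations(env=None, home=None):
    """Return the aws executable path and the config/credentials file paths.

    AWS_CONFIG_FILE and AWS_SHARED_CREDENTIALS_FILE override the default
    ~/.aws/config and ~/.aws/credentials locations.
    """
    env = os.environ if env is None else env
    home = Path.home() if home is None else Path(home)
    config = Path(env.get("AWS_CONFIG_FILE", home / ".aws" / "config"))
    credentials = Path(
        env.get("AWS_SHARED_CREDENTIALS_FILE", home / ".aws" / "credentials")
    )
    return {
        # None if "aws" is not on this user's PATH.
        "aws_executable": shutil.which("aws"),
        "config": config,
        "credentials": credentials,
    }


if __name__ == "__main__":
    print(f"Running as: {getpass.getuser()}")
    for name, path in aws_cli_locations().items():
        exists = path is not None and Path(path).exists()
        print(f"{name}: {path} ({'found' if exists else 'not found'})")
```

If the files show as "not found" for the GigaFlow user but exist for your own account, the CLI was configured under the wrong user.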

After installation, perform the following steps:

1. Add a role to your AWS instance with the following permissions:

- S3 List/Read/Download

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "s3:Get*",
        "s3:List*",
        "s3-object-lambda:Get*",
        "s3-object-lambda:List*"
      ],
      "Resource": "*"
    }
  ]
}

- EC2 List/Read/Describe

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "ec2:Describe*",
      "Resource": "*"
    }
  ]
}

2. Generate an access key (an access key ID and its secret access key) and add it to your AWS CLI configuration (see https://docs.aws.amazon.com/cli/latest/userguide/cli-configure-quickstart.html).

3. To test your configuration, run the following commands from the CLI:

aws ec2 describe-instances

aws s3 ls s3://

aws ec2 describe-flow-logs
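The three test commands in step 3 can also be run together from a short script that reports which ones succeed. This is a minimal sketch, assuming only that a zero exit status from the AWS CLI means the command worked; the function name `run_checks` and the 60-second timeout are arbitrary choices for illustration. Run it as the same user that runs GigaFlow.

```python
"""Run the AWS CLI checks from step 3 and report which ones succeed."""
import subprocess

# The three test commands from step 3, as argument lists.
CHECKS = [
    ["aws", "ec2", "describe-instances"],
    ["aws", "s3", "ls", "s3://"],
    ["aws", "ec2", "describe-flow-logs"],
]


def run_checks(checks=CHECKS, runner=subprocess.run, timeout=60):
    """Return {command text: True if it exited with status 0, else False}."""
    results = {}
    for cmd in checks:
        key = " ".join(cmd)
        try:
            proc = runner(cmd, capture_output=True, text=True, timeout=timeout)
            results[key] = proc.returncode == 0
        except FileNotFoundError:
            # The aws executable is not on this user's PATH.
            results[key] = False
        except subprocess.TimeoutExpired:
            # No answer within the time limit (e.g. no route to AWS).
            results[key] = False
    return results


if __name__ == "__main__":
    for command, ok in run_checks().items():
        print(f"{'OK  ' if ok else 'FAIL'} {command}")
```

If a check fails, run that command directly to see the error message the AWS CLI prints.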

GigaFlow uses the three scripts listed below to ingest AWS VPC flow logs into its database. Copy them from the GigaFlow\resources\docs\eventsscripts\aws\ folder to the GigaFlow\resources\prepos\eventscripts folder.

| File | Use | Event Script Attributes | Output File |
|---|---|---|---|
| AWSVPCFlow.js | Processes the downloaded VPC flow logs and ingests the data into the GigaFlow database. | vpcDownloadFolder: The folder with the downloaded flow logs (same as S3VPCFileDownload.js uses) | /static/s3fileprocess.html |
| S3EC2InstanceDetails.js | Gathers instance and interface information from AWS and uses it to add attributes to the created devices so that they can be searched on and displayed as appropriate. | | /static/ec2instancedetails.html |
| S3VPCFileDownload.js | Downloads the most recent VPC flow logs from one or more S3 buckets. | vpcBuckets: The folder to download flow logs to (same as AWSVPCFlow.js uses) | /static/s3filedownload.html |
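The copy step can be scripted. This sketch assumes you pass the GigaFlow installation folder as an argument (its location is not given on this page); the source and destination folder names are taken verbatim from the instructions above, and the function name `copy_aws_scripts` is hypothetical.

```python
"""Copy the three AWS event scripts into GigaFlow's event script folder."""
import shutil
import sys
from pathlib import Path

# Script names from the table above.
AWS_SCRIPTS = ["AWSVPCFlow.js", "S3EC2InstanceDetails.js", "S3VPCFileDownload.js"]


def copy_aws_scripts(gigaflow_root):
    """Copy the AWS scripts and return the list of destination paths.

    gigaflow_root is the GigaFlow installation folder (assumed; supply the
    path GigaFlow is installed under on your system).
    """
    root = Path(gigaflow_root)
    # Folder names as given in this page's instructions.
    src_dir = root / "resources" / "docs" / "eventsscripts" / "aws"
    dst_dir = root / "resources" / "prepos" / "eventscripts"
    dst_dir.mkdir(parents=True, exist_ok=True)
    copied = []
    for name in AWS_SCRIPTS:
        src = src_dir / name
        if not src.is_file():
            raise FileNotFoundError(f"missing event script: {src}")
        copied.append(Path(shutil.copy2(src, dst_dir / name)))
    return copied


if __name__ == "__main__":
    if len(sys.argv) == 2:
        for path in copy_aws_scripts(sys.argv[1]):
            print(f"copied {path}")
    else:
        print("usage: python copy_aws_scripts.py <GigaFlow install folder>")
```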

After you copy these scripts, they will be visible on the GigaFlow Event Scripts page and you can use this page to monitor their status.
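This page does not document how AWSVPCFlow.js reads the downloaded files. As an illustration of what those files contain, the sketch below parses VPC flow log files assuming the default plain-text S3 delivery: gzip-compressed files whose first line is a header naming the fields (for example `version account-id interface-id srcaddr dstaddr ...`). It is not GigaFlow's implementation, Parquet-format logs are not handled, and the function names are hypothetical.

```python
"""Parse VPC flow log files as delivered to S3 (gzip-compressed text)."""
import gzip
import io
import sys


def parse_flow_log(text):
    """Yield one dict per record, keyed by the field names in the header line.

    "-" (used for fields with no value, e.g. NODATA/SKIPDATA records) becomes
    None. All other values are kept as strings.
    """
    lines = [line for line in text.splitlines() if line.strip()]
    if not lines:
        return
    fields = lines[0].split()
    for line in lines[1:]:
        values = line.split()
        if len(values) != len(fields):
            continue  # skip rows that do not match the header
        yield {f: (None if v == "-" else v) for f, v in zip(fields, values)}


def parse_flow_log_gz(data):
    """Decompress the bytes of a .log.gz file and return its parsed records."""
    with gzip.open(io.BytesIO(data), "rt", encoding="utf-8") as fh:
        return list(parse_flow_log(fh.read()))


if __name__ == "__main__":
    # Usage: python parse_flow_log.py FILE.log.gz [FILE.log.gz ...]
    for path in sys.argv[1:]:
        with open(path, "rb") as fh:
            for record in parse_flow_log_gz(fh.read()):
                print(record)
```

Reading the header instead of hard-coding field positions keeps the sketch working if a flow log uses a custom field list.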